AI: Humanity's Doom or Salvation? A Polemic with Professor Zybertowicz

On the Horyzont AI Discord, where a community interested in using and developing AI-based tools gathers, my attention was drawn to a presentation by Professor Andrzej Zybertowicz in which he laid out a catastrophic vision of artificial intelligence development. At first I was reluctant to watch it, having already heard plenty of pessimistic visions. My colleagues on Discord persuaded me, however, and I listened to the professor’s entire speech. Below is my response to the charges the professor levels against the techno-optimistic worldview, to which I subscribe. Update from June 6, 2024: the professor responded to my polemic, and I have already replied to his response. If you are interested in the further debate, I encourage you to read the material below and then follow this thread on the X platform: https://x.com/Maciej_M/status/1787578065767321650

Speculation

Nobody – neither I, nor Professor Zybertowicz, nor Sam Altman, Elon Musk, or anyone else in the world – knows what the future will look like in general, and in particular the future in the context of artificial intelligence development. All considerations on this topic, especially mine, are therefore deliberations about what is more or less probable. I am aware of many risks, including those mentioned by the professor. However, I believe that the vision presented by techno-pessimists is not only less probable, but that slowing down technological development is harmful to the well-being of humanity. I will justify this, at least partially, by addressing the professor’s statements.

Although I believe that implementing the demands of techno-pessimists would be harmful to humanity, I also know that a certain dose of pessimism is necessary. It is worth confronting one’s convictions with opposing views in order to verify and revise one’s positions. For this reason, I am glad that Professor Zybertowicz voices his beliefs. However, I feel obligated to present counterarguments that reveal both sides of the coin.

The Polemic

The professor’s statements, reproduced more or less accurately, are set apart on their own lines; my response follows each one.

We will live in a world of conflicts, some of which will escalate into wars.

It seems that the professor confused the tense in the above statement. Instead of “we will live,” it should be “we have lived and we live.”

The history of human civilization is a string of recurring conflicts, including wars, which have repeatedly led to the extinction of entire cultures. The statement that we will live in a world of conflicts adds nothing new to the description of reality. What has changed with technological development is the weapons used to wage wars. People once fought with knapped stones, later with bows and muskets; today autonomous drones and cyberattacks are being deployed, and in the not-so-distant future fully autonomous war machines will follow. Weapons have always been lethal and terrifying in close encounters. In this regard, nothing changes. Let us also not forget the ultimate weapon, developed several decades ago and already used twice: the atomic bomb.

“AI will be the custodian of human nature” is a flawed assumption, because there is no such thing as human nature.

Regardless of how we define human nature, or even if we abandon the definition altogether on the grounds that we are too complex for such definitions to be created and applied, nothing stops us from stating that a higher form of intelligence can care for a lower one. We care for dogs, for example, on exactly the same principle. There are disgraceful exceptions: people who, out of greed or sick satisfaction, kill or torment animals. Someone might ask: what if AI becomes greedy or sick? I answer that question further below.

Joseph Aloisius Ratzinger, later Pope, predicted in his book what the digital revolution would lead us to – treating the individual as a set of numbers.

It was not the digital revolution that led to the objectification of the human being. Humans brought this about themselves, and the process has been under way since the dawn of history. The larger the population and the structures of social organization, the less significant the basic element of those structures, the individual, becomes. From the perspective of those who manage these organizations (political governments, corporate boards), a single human being is a number, regardless of whether that number is recorded on clay tablets or on a blockchain.

The emergence of artificial intelligence is a complete reversal of the message to make the Earth subject to us.

Quite the opposite. Artificial intelligence is the best tool we have ever created to make the Earth subject to us, and subject in the best possible sense of the word: so that the Earth serves us without being devastated by our existence. How can artificial intelligence help us make the Earth a better place for our development? For example, through the analysis of large datasets on the state of forests, waters, soils, temperatures, atmospheric composition, and so on. AI trained on such data can detect correlations invisible to classical analyses. We strongly hope that AI will also contribute to new scientific discoveries that accelerate our move away from fossil fuels. One technology humanity is working on that could provide practically unlimited energy is nuclear fusion.

It is hard to predict what scientific discoveries await us thanks to artificial intelligence, but logic suggests that such discoveries are inevitable, and the question is not whether, but when.

The emergence of artificial intelligence is a complete reversal of the Enlightenment message – that is, to understand the world.

Quite the opposite. If we create a superintelligence, and it is precisely this assumption that underlies the professor’s defeatism, it seems logical to expect, as I mentioned earlier, that it would be capable of making scientific discoveries that exceed the capabilities of the human mind. With the help of superintelligence, humanity has a decidedly greater chance of understanding reality better.

The professor mentions that AI systems are black boxes.

Black-box systems – that is, systems whose operations are difficult or impossible to trace in detail – are, above all, human brains. Just as we cannot trace the exact workings of deep neural networks, we cannot trace the exact workings of the human brain.

Creating another black-box system, but one significantly more intelligent than the human one, does not mean that humanity faces doom. What awaits us is a stronger intelligence, but whether it will be safe or dangerous for us does not depend on whether it is transparent.

Furthermore, there is no reason to assume that these systems will remain black boxes forever. It is not out of the question that AI itself will finally allow us to penetrate the complexity of neural networks and to understand brains, both biological and digital.

No fewer than 10% of researchers claim that there is a serious risk that AI will eliminate humans.

The professor, however, did not indicate what arguments underpin these fears. Since these arguments are absent, I assume that at least some, if not the majority, of these fears are the same ones I am addressing here.

At the same time, the professor invoked a comparison: aircraft manufacturers would not release a model for which there was a 10% risk of catastrophe. The professor thus assumed that someone actually calculated this 10% for AI. However, it is unknown who calculated it or how. Personally, I doubt such a calculation exists, because I believe it is incalculable.

Techno-enthusiasm is the most dangerous ideology.

I understand that this statement is based on the arguments I am debating here. If so, I do not feel alarmed. With all due respect, the professor’s statement makes about as much sense as a priest during Mass declaring that not going to church is evil.

In other words, the professor believes in something and preaches that belief. It is primarily a belief, however, not a conclusion derived from facts, which is what I am trying to demonstrate here. Much like the Church, which has repeatedly hindered the development of science (though it has also accelerated it many times), the esteemed professor takes on the role of a preacher proclaiming a defeatism that hampers progress.

AI carries systemic risk, and conclusions must be drawn from this.

Systemic risk is a concept that, in short, refers to threats affecting the entire system of human civilization. The professor chose to omit a very important fact in this matter: systemic threats have accompanied us for centuries and are not limited to a single area, but extend across many domains of our lives.

Examples of current systemic risks can be found in medicine, where work with viruses and bacteria can lead to unpredictable consequences. Physics also carries risks, particularly in the field of nuclear energy. In chemistry, the creation and study of new compounds can pose threats. Even economics, with its global financial crises, is a source of systemic risk. And the quintessential example of systemic risk, one that has accompanied us since the dawn of history, is politics with its wars and authoritarian regimes.

Systemic risks are nothing new; they have always been an element of our civilization, and people have always found ways to prevent them from leading to the annihilation of all humanity.

The professor claims that techno-enthusiasts create a smokescreen that prevents humanity from calmly examining the condition of civilization.

In what way do techno-enthusiasts prevent the professor or anyone else from examining anything? Everyone has the full right to conduct whatever analyses they consider necessary, subject to property rights. Here there is good news: Big Tech is moving toward open-source AI. Examples include Meta and X, which have already released their models, and anyone can download and analyze them for free.

What distinguishes digital technologies from other technologies? The fact that they can be copied infinitely, and this poses a threat to humanity.

While digital technologies significantly facilitate and accelerate copying, we have an example of another technology that copies on a scale unattainable by digital technology – biological replication.

No technology other than AI can replicate itself.

Microorganisms such as viruses, bacteria, and fungi, which are cultivated in laboratories, have excellent replication capabilities. Fundamentally, all biological life can in a sense be viewed as a technology developed by evolution. A technology that humanity has only recently begun to apply and manipulate.

Digital technologies, unlike others, permeate all layers of social life.

Technologies that permeate all layers of social life include manufacturing, transportation, energy, educational technologies, and even monetary exchange, assuming that too is a technology. All of these technologies permeate various aspects of social life, influencing how we work, communicate, travel, heal, learn, and function as a society.

Techno-enthusiasm is irresponsible thinking.

Quite the opposite – it is an approach that promotes the growth of human well-being. Techno-enthusiasts, or techno-optimists, advocate for developing technologies as quickly as possible so that we can defeat diseases, hunger, and wars, and protect humanity from extinction. Technological development is the primary factor driving human well-being, as measured, for instance, by average life expectancy.

Techno-pessimism, on the other hand, is the opposite trend – it is a drive to slow down the development of technology which, as I have indicated above, is the key factor in the growth of human well-being. Techno-pessimism is looking into the eyes of a child dying of cancer and telling them – we are not doing everything we can to help you, because we have terrifying visions of the future.

Techno-enthusiasts are a poorly paid army thanks to which corporations evade regulations.

Following such rhetoric, one could say that techno-pessimists are a poorly paid army deployed by corrupt politicians and interest groups who restrict competition by hindering the development of technologies that disrupt the status quo.

Democracies provide a minimal level of control over those in power.

Exactly such a level should be maintained – minimal.

Techno-pessimists would like to extend this control far beyond that minimal scope, thereby putting the brakes on humanity’s further development. More regulations, more bureaucracy, more officials, more wasted money – these are the consequences to which the demands of techno-pessimists lead.

A structural poisoning of the infosphere is taking place.

The current poisoning of the infosphere, despite its severity, does not compare to the effects of past poisonings perpetrated by the Church and authoritarian regimes such as the dictatorships of Hitler and Stalin. Those historical cases were far more destructive, as any attempt at correction or the dissemination of facts was met with brutal resistance from those in power. This resistance often ended in the death of those who dared to speak out – earlier at the stake and on the breaking wheel, later most commonly by bullets.

Today’s poisoning of the infosphere, while problematic, takes place in an environment of free speech. This freedom guarantees that any potentially harmful information can be quickly neutralized by appropriate corrections.

The professor was asked twice why he is pessimistic about controlling AI development, given that humanity manages to control biological weapons.

Despite being asked twice, the professor did not provide an answer, redirecting his response to the much easier ground of chemical weapons. Chemical weapons, however, are an entirely different type of weapon, because the molecules of poisonous gas do not multiply, unlike viruses or bacteria.

A catastrophe caused by AI is needed – one that is neither too strong nor too weak – to show humanity that AI development should be slowed down.

This statement is very naive, considering the fact that since the dawn of history there has been virtually no century without precisely such catastrophes – neither too weak nor too strong. These catastrophes are wars.

Once, entire civilizations perished in wars. In the history of recent centuries, millions have died in wars. The machinery of World War II produced a scalable technology of genocide – extermination camps.

What have these catastrophes taught us? As we can all see, nothing. New wars break out almost at the snap of the fingers of one madman or another holding the reins of power.

The professor presents an example that AI, when asked how to reduce the maintenance costs of its own infrastructure, would conclude that one way is to drain the oxygen from the atmosphere in order to reduce the rate of corrosion of that infrastructure. Reducing oxygen would destroy human civilization.

This example is absurd because it assumes the absence of appropriate safeguards that humanity always implements when creating new, risky technologies. Here are a few examples:

- During work on the Manhattan Project, the most brilliant scientists analyzed the risks associated with conducting a nuclear test. A consensus was reached among leading minds that the risk of negative consequences from this test was acceptable and that the implemented safeguards were sufficient.

- In research on viruses or bacteria that could pose a threat to humanity, maximum safety measures are employed to prevent their release from the laboratory. Although failures do occur, they demonstrate the imperfection of existing safeguards, not a disregard for risk through the absence of such safeguards.

- The professor himself gave the example of chemical weapons, whose use was restricted due to difficulties in controlling the spread of gas under changing atmospheric conditions. As a preventive measure, the decision was made to cease using this technology.

Many more such examples could be listed. The self-preservation instinct is biologically encoded in humans, and the greater the risk associated with a given action, the more strongly this instinct influences our caution.

AI could eliminate humanity.

Setting aside the self-preservation instinct of humans and the resulting caution, which I wrote about above, it is worth pointing to two additional issues here.

First, to such a statement one can respond that humanity, as the most intelligent form of life, could also annihilate all life on Earth. The facts, however, are that out of the millions of species that exist on our planet, humanity has driven a relatively negligible number to extinction. Moreover, the species that have gone extinct did not disappear because humans deliberately sought to eradicate them, but as a result of irresponsibility and thoughtlessness, mainly related to excessive hunting. These were disgraceful exceptions to the principle of protecting the diversity of life, which is recognized and practiced by nearly all of humanity.

Given that even humans, with their limited intelligence and propensity for greed, can appreciate and respect the necessity of protecting life on Earth, it seems logical to assume that a more advanced intelligence would understand this even better. This applies especially to artificial intelligence, which learns about the world and develops exclusively thanks to its diversity. This diversity – and therefore the multitude of species, including the diversity of human minds and the thoughts generated by those minds – constitutes the source of information for artificial intelligences. Information is the primary and sole “nourishment” of artificial intelligences. A diverse world produces decidedly more information than a homogeneous one. Therefore, it is logical to conclude that artificial intelligence independent of humans will have a natural need to increase this diversity. Increasing diversity means fostering the flourishing of life in its various forms.

The professor, describing the young and talented engineers at DeepMind, points to their lack of social experience, as a result of which they do not realize they are playing with matches in a powder magazine.

The professor again seems to ignore the facts: throughout its entire history, the technological and scientific development of human civilization has been built on the shoulders, or rather on the minds, of precisely such people. It is the strongest minds, “blinded” by science, immersed in their work and ceaselessly searching for solutions to scientific and engineering problems, who forget or push aside the everyday and extraordinary problems of the world. It is the intense concentration of their minds on specific problems that lets these people achieve the breakthrough discoveries that continuously improve human life.

The engineers at DeepMind are precisely such people. Their role is not to think about the catastrophes that might be triggered by the deployment of the technologies they develop. We do not want to disturb these engineers. Quite the opposite: we want to give them maximum freedom of research. The responsibility for ensuring that this research does not lead to catastrophe should fall not on the scientists and engineers, although they too have their self-preservation instincts, but on corporate management and governments, through the creation of appropriately secured laboratories and procedures. Yes, a certain amount of procedure and regulation is necessary. I am absolutely not an advocate of zero oversight.

Summary

In closing, I believe that the professor certainly has good intentions, but implementing the views he espouses would be harmful to humanity. Hindering the development of technology means slowing the search for cures to civilization’s greatest afflictions, such as disease, poverty, and war. While the professor paints catastrophic visions of the future, children are dying today of incurable diseases or of hunger, corruption and greed are ravaging society, and wars are destroying the world. Humanity needs technological development as fast as humanly possible. Oversight of this development is necessary, but it should be kept as minimal as possible. Development should be as fast as it can be while remaining safe. Maximality, safety, speed, caution: these are all concepts that each of us understands in our own way. Reality is the resultant of these diverse interpretations and of the influences of interest groups.

Maciej Michalewski

CEO @ Element. Recruitment Automation Software

Recent posts:

The Great Reorg: companies are rebuilding around AI

Foundation Capital interviewed 25 companies and found that AI is not just speeding up work — it is forcing organizations to rebuild from the ground up around four human roles.

Gallup 2026: the workplace is cracking and managers lose drive faster than the rest

Gallup State of the Global Workplace 2026: engagement fell to 20%, managers lose drive faster than their teams, and the AI paradox is clear: personal productivity rises, organizational does not.

A Polish-founded robot that drives screws faster than humans

A Polish-founded robot that drives screws faster than humans When people think about automation, they usually picture chatbots, image generators, and coding assistants. Meanwhile, AI

People prefer AI poetry over Shakespeare

A study published in Scientific Reports (Nature) produced results that should interest anyone who thinks human creativity is easy to tell apart from machine output.

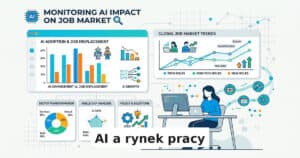

Three tools that measure AI’s impact on the job market

The debate about AI’s impact on the job market has been going on for years, but it only recently moved past the “experts predict” stage.

Poland’s job market: 238,000 offers and the specter of jobless recovery

In February 2026, Polish employers posted 238,000 job offers – 7% fewer than last year. Analysis of the Grant Thornton report and Element CEO commentary.