Is Artificial Intelligence Truly Intelligent?

What Will You Learn from This Article?

This article is quite extensive, so there is a great deal to learn from it. The main goal is to answer the titular question: is AI truly intelligent? In pursuit of this answer:

- you will explore historical and modern definitions of intelligence

- you will learn how artificial intelligence is created, what machine learning is, what language models are, and what neural network parameters mean

- you will examine the topic of intelligence from original perspectives that suggest intelligence may exist even beyond biological or artificial neural networks

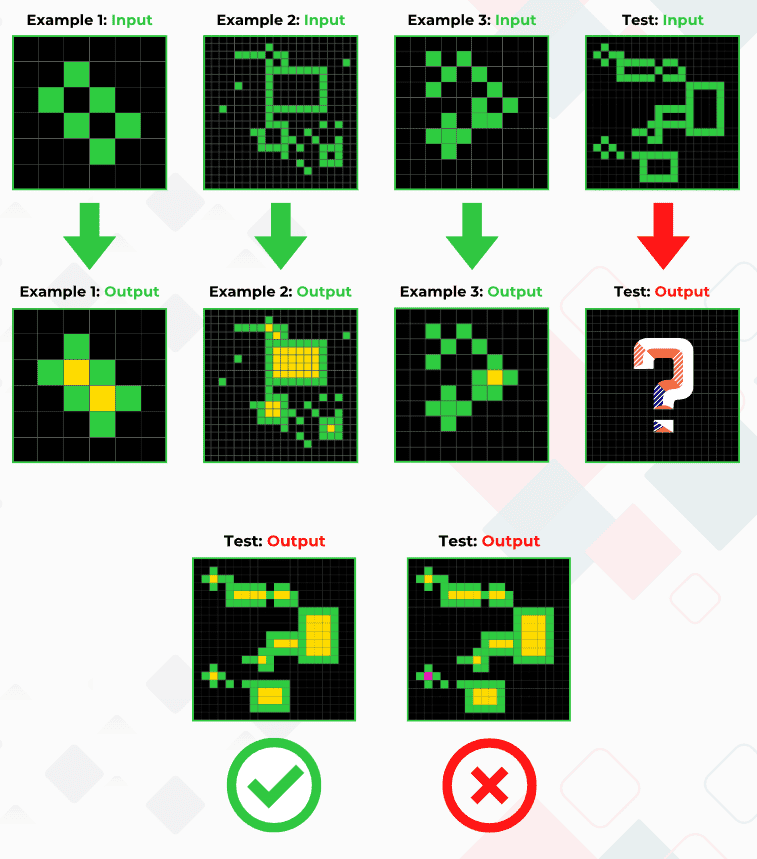

- you will see a fascinating intelligence test on which the average human performs far better than the best AI

Why I Wrote This Article

First and foremost, I wanted to organize my own knowledge about intelligence. I have been passionate about technology for years and have been working on implementing artificial intelligence to automate recruitment processes. My goal is to integrate AI into an ATS system in a way that makes recruiters supervisors of the process and frees users of recruitment systems from tedious clicking.

Furthermore, I want people to understand artificial intelligence as well as possible, because it is a groundbreaking technology that increasingly permeates our lives. This permeation sometimes causes concerns — for some people even existential ones. These concerns may stem, among other things, from a lack of understanding of AI technology. With this article, I will try to address that gap.

Definitions of Intelligence and Glossary of Terms

To the question “Is artificial intelligence truly intelligent?” the answer must be: “It depends on the definition of intelligence you adopt.”

Scientists disagree about what intelligence actually is. In this article, I will present several definitions that I find simultaneously interesting, distinct, and useful for examining the problem from different perspectives. I also present below a glossary that explains the technical terms appearing throughout the article.

Glossary of Terms

- Machine learning — a category of computer algorithms used to build systems (models) that learn from data.

- Learning (in the context of machine learning) — a process in which a system learns to perform tasks, such as image recognition or outcome prediction, based on data analysis. Instead of being programmed step by step (the classical approach to building computer systems), the system independently “learns” to solve problems using examples we provide.

- Model — a system architecture, along with its parameter configuration, created through machine learning. An example of a model is GPT-4o, used in ChatGPT.

- Language model — a model specialized in using natural language, e.g., ChatGPT, Claude, and many others.

- Model parameters — in simplified terms, a model is a gigantic collection of numerical matrices. Gigantic means these matrices hold billions, or even trillions, of numbers. Imagine printing them as an Excel spreadsheet in which each cell is a 1 cm square. The GPT-4 model used in ChatGPT reportedly has roughly 1.8 trillion parameters (OpenAI has not published the figure), so its “spreadsheet” would cover about 180 square kilometres, the area of roughly 25,000 football pitches. Each cell in that spreadsheet contains a number, and that number is a model parameter.

- GPT (Generative Pre-trained Transformer) — an artificial intelligence model used in ChatGPT, among other applications. GPT models are built on the transformer architecture, whose central component is the attention mechanism. Thanks to attention, GPT models can capture the meanings of words and the relationships between words in different contexts. The invention of the transformer (2017), an architecture built around attention, was a breakthrough in the development of artificial intelligence.

- Prompt — a query directed at an artificial intelligence in order to obtain a response, in the form of text, a document, an image, or audio (such as voice).

- Hallucinations — when a model provides responses containing false information or implausible predictions. Hallucinations result from the fact that models operate on a probabilistic basis: they generate the answer that is most probable given the patterns in their training data. However, the most probable answer is not always the correct one.

- Multimodality — a model’s ability to process text, images, and audio.
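The spreadsheet analogy for model parameters is easy to check with a few lines of arithmetic. This sketch assumes the reported (unofficial) figure of 1.8 trillion parameters, one parameter per 1 cm × 1 cm cell, and a 105 m × 68 m football pitch; all three numbers are assumptions chosen for illustration.

```python
# Assumptions for illustration only:
PARAMETERS = 1.8e12          # reported, unofficial GPT-4 parameter count
CELL_AREA_CM2 = 1.0          # one parameter per 1 cm x 1 cm spreadsheet cell
PITCH_AREA_M2 = 105 * 68     # a common football pitch size: 7,140 square metres

CM2_PER_M2 = 100 * 100       # 10,000 square centimetres in one square metre
M2_PER_KM2 = 1000 * 1000     # 1,000,000 square metres in one square kilometre

area_m2 = PARAMETERS * CELL_AREA_CM2 / CM2_PER_M2
area_km2 = area_m2 / M2_PER_KM2
pitches = area_m2 / PITCH_AREA_M2
cells_per_pitch = PITCH_AREA_M2 * CM2_PER_M2

print(f"Spreadsheet area: {area_km2:,.0f} km^2")
print(f"Equivalent football pitches: {pitches:,.0f}")
print(f"Parameters that fit on one pitch: {cells_per_pitch:,}")
```

One pitch holds about 71 million one-centimetre cells, so a model with over a trillion parameters needs tens of thousands of pitches.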

Note: I will sometimes use the terms artificial intelligence, model, and ChatGPT interchangeably, without going into the details of the differences between these concepts. For example, ChatGPT is an application that uses various GPT models, and therefore various artificial intelligence models. ChatGPT itself is an application, an interface through which we use these models. A detail-oriented person might say that ChatGPT is not artificial intelligence, and they would be right. I am aware of this, but I am deliberately simplifying the message to make the article accessible to people less familiar with the technical aspects of this topic.
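The attention mechanism named in the glossary entry for GPT can be illustrated with a very small sketch. This is a minimal, pure-Python version of scaled dot-product attention, assuming three made-up two-dimensional word vectors. In a real transformer, the queries, keys, and values are produced by learned projections, there are many attention heads, and the vectors have thousands of dimensions.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)  # subtracting the maximum avoids overflow in exp()
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention(queries, keys, values):
    """Scaled dot-product attention.

    For each query, score every key by similarity (dot product divided by
    the square root of the vector size), turn the scores into weights with
    softmax, and return the weighted average of the values.
    """
    scale = math.sqrt(len(keys[0]))
    outputs, weight_rows = [], []
    for q in queries:
        weights = softmax([dot(q, k) / scale for k in keys])
        mixed = [sum(w * v[i] for w, v in zip(weights, values))
                 for i in range(len(values[0]))]
        outputs.append(mixed)
        weight_rows.append(weights)
    return outputs, weight_rows

# Hypothetical toy vectors for three words; each vector is used directly
# as that word's query, key, and value (a simplification).
words = ["bank", "river", "money"]
vectors = [[1.0, 1.0], [1.0, 0.0], [0.0, 1.0]]

outputs, weights = attention(vectors, vectors, vectors)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how strongly one word attends to each of the three words. In this toy setup, "river" attends equally to itself and to "bank", and less to "money".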

The History of Intelligence and Its Definitions

The concept of intelligence has evolved throughout history and has been shaped by thinkers and researchers from ancient times to the present. Initially, it was defined as the ability to understand, comprehend, and acquire knowledge. In the Middle Ages, intelligence was associated with the soul and angels, while during the Enlightenment it was seen as a tool for acquiring knowledge and driving progress.

In the 19th century, scientific psychology was born, and it began to study intelligence more systematically. At the beginning of the 20th century, Alfred Binet created the first intelligence tests, the forerunners of today’s IQ tests, which aimed to measure children’s intelligence. Later in the 20th century, the concept of intelligence expanded to include new areas, such as creativity, emotional intelligence, and social intelligence.

Modern definitions of intelligence encompass a wide range of abilities, such as:

- Learning and acquiring knowledge

- Problem-solving and decision-making

- Abstract and logical thinking

- Planning and predicting

- Language and communication

- Creation and innovation

- Adaptation to new situations

- The ability to generalize

There is no single, universally accepted definition of intelligence. Different researchers emphasize different aspects of this complex trait. There are also many different theories of intelligence that attempt to explain how it works and how it develops.

With such a broad understanding of intelligence, it is important to remember that intelligence is not a one-dimensional trait. People can have different strengths and weaknesses across different areas of intelligence. There are many factors that influence intelligence, including genetics, environment, and upbringing.

Research on intelligence is still ongoing, and there is much we still do not know about this fascinating ability. Here are some example definitions of intelligence that emerged before the current technological revolution associated with AI:

- Charles Spearman (1904) introduced the concept of the “g factor” (general intelligence), suggesting that intelligence is a single general ability that influences performance in all cognitive tasks.

- Alfred Binet and Theodore Simon (1905) held that intelligence rests on the ability to understand, judge, and reason well. Binet and Simon created the first intelligence test, which was designed to identify children who needed additional educational support.

- David Wechsler (1944) defined intelligence as the global capacity of the individual to act purposefully, think rationally, and deal effectively with the environment. He is the creator of popular intelligence tests such as the WAIS and WISC.

- Howard Gardner (1983) proposed the theory of multiple intelligences, defining intelligence as the ability to solve problems or create products that are valued in one or more cultural contexts. He originally identified seven types of intelligence (linguistic, logical-mathematical, spatial, musical, bodily-kinesthetic, interpersonal, and intrapersonal), later added naturalistic intelligence, and has considered existential intelligence as a possible ninth.

- Robert Sternberg (1985) developed the triarchic theory of intelligence, which includes analytical intelligence (the ability to analyze, evaluate, judge, and compare), creative intelligence (the ability to generate new ideas), and practical intelligence (the ability to deal with everyday problems).

- John Carroll (1993) created the three-stratum theory, which suggests that intelligence has three layers: the narrowest includes specific abilities, the middle layer includes broad abilities (such as memory, learning, and perception), and the highest, most general layer is general intelligence (the g factor).

- Daniel Goleman (1995) popularized the concept of emotional intelligence, defining it as the ability to recognize, understand, and manage one’s own emotions and the emotions of others.

Five Definitions of Intelligence in the Context of AI

As someone interested in the subject of AI, I have come across several particularly compelling definitions of intelligence. I have selected them to help answer the main question of this article — is AI intelligent? In my assessment, these definitions are not only apt but also show intelligence from different perspectives, while being significantly different from one another. Understanding how many divergent definitions of intelligence compete with each other allows us to approach the question of AI intelligence with appropriate nuance.

The five definitions I consider in seeking the answer to our question:

- The definition of Jacek Dukaj, who defines intelligence as the ability to operate on symbols. This is the most general definition I have encountered, and as such it encompasses all the other definitions presented in this article.

- The definition of Andrzej Dragan, who defines intelligence as the ability to find analogies. Finding analogies between different elements of reality (e.g., between the fact that apples fall and that celestial bodies orbit each other) undeniably requires intelligence.

- The popular definition, meaning the one I encounter most frequently and which seems to be most commonly accepted. This definition boils down to the statement that intelligence manifests itself in cognitive abilities, such as the ability to observe, learn, adapt to changing conditions, and solve problems.

- The definition of intelligence understood as the ability to acquire knowledge. I encountered this definition among software engineers who research and develop artificial intelligences. Proponents of this definition are harsh in their assessment of the intelligence of current AI systems. At the same time, this definition will be very useful for explaining two key stages of how artificial intelligences function — training and inference.

- The definition of François Chollet, who argues that intelligence is the efficiency with which you operationalize past information in order to deal with the future. I included this definition because of a fascinating intelligence test based on it, one that is currently very difficult for AI. The test is easy even for a child, yet ChatGPT and other models still largely fail at it.

Jacek Dukaj's Definition -- Operating on Symbols and the Intelligence of Life

In one of my notes from talks by Jacek Dukaj, a brilliant writer and futurologist, I read:

Operating on symbols is the broadest definition of intelligence.

Dukaj bases his view on the assumption that the key characteristic of intelligent action is the ability to manipulate representations — that is, symbols — in order to solve problems, communicate, and create new concepts. Here is why this makes sense:

- Symbol in this context is broadly understood and can include words, images, mathematical signs, gestures, and even abstract ideas, also represented by memes. Each of these elements represents something larger, simple or complex, material or immaterial. Operating on symbols means the ability to use these representations for thinking, problem-solving, or communicating.

- The ability to operate on symbols is closely related to abstract thinking, which is the capacity to think about concepts that are not directly connected to concrete sensory experience. Abstraction enables understanding and manipulating concepts that have no physical form.

- Virtually every definition of intelligence points, more or less directly, to the ability to adapt to new situations and solve new problems. The ability to operate on symbols makes abstract thinking possible, as well as the modeling of various scenarios and solutions. This, in turn, serves as a catalyst for the process of solving complex problems.

- Another recurring aspect of intelligence is the capacity for learning, meaning the ability to change behavior or thinking based on experience. Learning is also addressed in one of the definitions presented below. Operating on symbols enables the organization of knowledge, as well as the creation and testing of hypotheses — processes that are essential from the standpoint of learning.

In summary, the ability to operate on symbols is the foundation of abstract thinking, language, problem-solving, and learning — and these are activities we consider hallmarks of intelligent behavior. Understanding intelligence therefore requires understanding the capacity to process and manipulate representations.

Returning to the titular question — are artificial intelligences, particularly language models, intelligent according to the definition of intelligence as the ability to operate on symbols? The answer is decidedly yes, because they can analyze, generate, and interpret natural language and images, which are forms of symbols.

Here is how this works, using ChatGPT as an example:

- ChatGPT can analyze texts, recognizing patterns and structures in language. This enables it to understand the meanings of words, the relationships between them, and the context, which is crucial for operating on linguistic symbols.

- ChatGPT creates new texts based on the patterns and structures it has understood. By generating sentences, paragraphs, or entire articles, it uses known symbols (words) and combines them into a coherent whole, which is an example of advanced symbol manipulation.

- ChatGPT can answer questions, interpret user queries, and provide responses based on information encoded through words. This demonstrates its ability to understand and manipulate symbols in a way that imitates human thinking.
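The idea of generating text from learned patterns can be illustrated with a toy that is far simpler than ChatGPT: a bigram model that only counts which word follows which in a tiny made-up corpus, then repeatedly appends the most probable next word. This is a sketch under heavy assumptions; real language models use neural networks over tokens and take the whole preceding context into account, not just the last word.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def next_word_probs(follows, word):
    """Convert the follow-up counts for one word into probabilities."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def generate(follows, start, length):
    """Greedy generation: repeatedly append the most probable next word
    (ties go to the word seen first in the corpus)."""
    out = [start]
    for _ in range(length):
        probs = next_word_probs(follows, out[-1])
        if not probs:  # the last word was never followed by anything
            break
        out.append(max(probs, key=probs.get))
    return " ".join(out)

# Hypothetical tiny training corpus.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigrams(corpus)
print(next_word_probs(model, "the"))  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
print(generate(model, "the", 4))      # the cat sat on the
```

The toy continues with whatever is most frequent in its data, not with what is true. This is the same probabilistic behaviour that lies behind hallucinations.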

In my view, there is therefore no doubt that artificial intelligences such as ChatGPT can operate on symbols in a way that satisfies Dukaj’s definition of intelligence.

Fascinating Implications of Dukaj's Definition

Before I part ways with Dukaj’s ideas and move on to the next definition, I want to share two fascinating insights from this extraordinarily wise thinker.

The first insight is a vision that had also been on my mind, and I found its confirmation in Dukaj’s words.

In one of his interviews, Dukaj stated that an example of the ability to operate on symbols is DNA code. It logically follows, then, that life itself is intelligent and does not need a brain — or even a nervous system — to express that intelligence.

Quoting Dukaj:

The creation of new information or knowledge does not require a self-aware agent. In our case, there was such an agent — Homo sapiens, which developed technological civilization. But this is not a necessity. A Boltzmann brain is an extreme example — theoretically, an intelligent brain can arise spontaneously.

According to Dukaj (and I once again agree), DNA operates in an intelligent manner; it contains within itself a certain primordial element of intelligence that humanity still does not fully understand. This element remains incomprehensible because, to this day, we are not certain how DNA arose or how it “knows” how to encode, in sequences of nucleotides (which function here as symbols), the order in which amino acids must be assembled to produce the appropriate proteins.

It is not the brain, not even the simplest nervous system, but life itself at the most fundamental molecular level that operates with sequences of nucleotides (A, T, C, G) as symbols encoding genetic information. The processes of DNA replication, transcription, and translation, which transform genetic code into RNA and proteins, as well as DNA’s capacity for mutation and evolution, demonstrate how life uses abstract symbols to store, process, and transmit information.
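The symbol-processing character of transcription and translation can be sketched in a few lines. This is a simplified model, assuming the input is the DNA coding strand beginning at a start codon and using only a small subset of the standard genetic code. In real cells, RNA polymerase reads the template strand, and many regulatory steps are involved.

```python
# A small subset of the standard genetic code (mRNA codon -> amino acid).
CODON_TABLE = {
    "AUG": "Met", "UUU": "Phe", "UUC": "Phe", "GGC": "Gly",
    "GCU": "Ala", "AAA": "Lys", "UGG": "Trp",
    "UAA": "STOP", "UAG": "STOP", "UGA": "STOP",
}

def transcribe(coding_strand):
    """DNA coding strand -> mRNA: the same sequence, with T replaced by U."""
    return coding_strand.replace("T", "U")

def translate(mrna):
    """Read the mRNA three letters (one codon) at a time until a stop codon.
    Codons missing from this small table raise KeyError."""
    protein = []
    for i in range(0, len(mrna) - 2, 3):
        amino_acid = CODON_TABLE[mrna[i:i + 3]]
        if amino_acid == "STOP":
            break
        protein.append(amino_acid)
    return protein

mrna = transcribe("ATGTTTGGCTAA")  # hypothetical short gene
print(mrna)                        # AUGUUUGGCUAA
print("-".join(translate(mrna)))   # Met-Phe-Gly
```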

I am constantly fascinated by this concept, and under the influence of this fascination I publish posts like this on social media:

Here we are, chit-chatting about LLMs, while intelligent nature builds molecular motors with rotary clutches, drive shafts, and bearings that spin at 20,000 revolutions per minute.

— Maciej Michalewski — e/acc (@Maciej_M) August 15, 2024

We think we are so advanced, but in reality we understand and are able to… https://t.co/2wU4f9xUG2

Screenshot: A tweet by Maciej Michalewski discussing how nature creates molecular motors with rotary clutches, drive shafts, and bearings operating at 20,000 RPM — highlighting that despite our technological confidence, we still understand very little about the intelligence embedded in nature.

Intelligence at the level of chemical molecules may be hard for some people to accept. If someone still questions the intelligence of language models like ChatGPT, they will be even less likely to accept the intelligence of DNA, or of matter in general.

However, I agree with Dukaj’s definition. I believe that life arose precisely thanks to intelligence encoded somewhere deep in the structure of reality. The incredibly complex and still-incomprehensible phenomenon of life — which we are still unable to replicate — is the fruit of some form of intelligence.

Some form of intelligence? A different kind from ours? Can other intelligences exist? To answer these questions, let us move to another fascinating reflection from Jacek Dukaj.

Here is another quote from Dukaj, recorded in my notes:

What is the probability that our understanding of intelligence is the benchmark for intelligence in general? Imagine a phase space in which we have as many dimensions as there are possible variables for constructing intelligence in general, and in that phase space we have one tiny dot, and that dot is the intelligence possessed by Homo sapiens. What is the probability that this is the optimal form of intelligence, that no other form could surpass it? If, after reaching the technological singularity, we allow our artificial intelligence to build successively better versions of itself, then logically, moving through that phase space, it will drift away from Homo sapiens intelligence toward increasingly strange and alien forms of intelligence. Otherwise, we would have to accept that the universe is anthropic: that it was created with precisely the right combination of assumptions, physical laws, elementary particles, speed of light, and so forth, solely so that the human intelligence that evolved within it would be optimal. This is a kind of pre-Copernican belief. If we do not subscribe to it, the logical consequence is that human intelligence is a random version of intelligence located on the fringes of the phase space, while the optimum lies somewhere in the center.

In other words, according to Dukaj (and again, I find it hard to disagree), if we reject the idea of an anthropic universe (in short, one created so that humanity could exist), then there may be infinitely many kinds of intelligence, and human intelligence is most likely not the optimal one. Intelligence, including artificial intelligence, may therefore develop into a better-optimized and thus more powerful form, thereby gaining an advantage over human intelligence.

In the phase space described by Dukaj, many other intelligences may exist — weaker, stronger, different, and incomprehensible. Perhaps it is precisely such an intelligence, incomprehensible to us, that created life by being able to operate on symbols at the level of fundamental matter, such as chemical molecules, or perhaps even subatomic particles. Fascinating!

Andrzej Dragan's Definition

Andrzej Dragan, a world-class quantum physics researcher who also takes a keen interest in artificial intelligence, argues that intelligence is the ability to build comparisons, recognize patterns, and find analogies. Dragan illustrated this with the example of Isaac Newton, who is recognized as one of the greatest scientists in history precisely because he was able to build an analogy between the laws governing a falling apple and the motion of the “pixels” in the sky (to quote Dragan), meaning the celestial bodies.

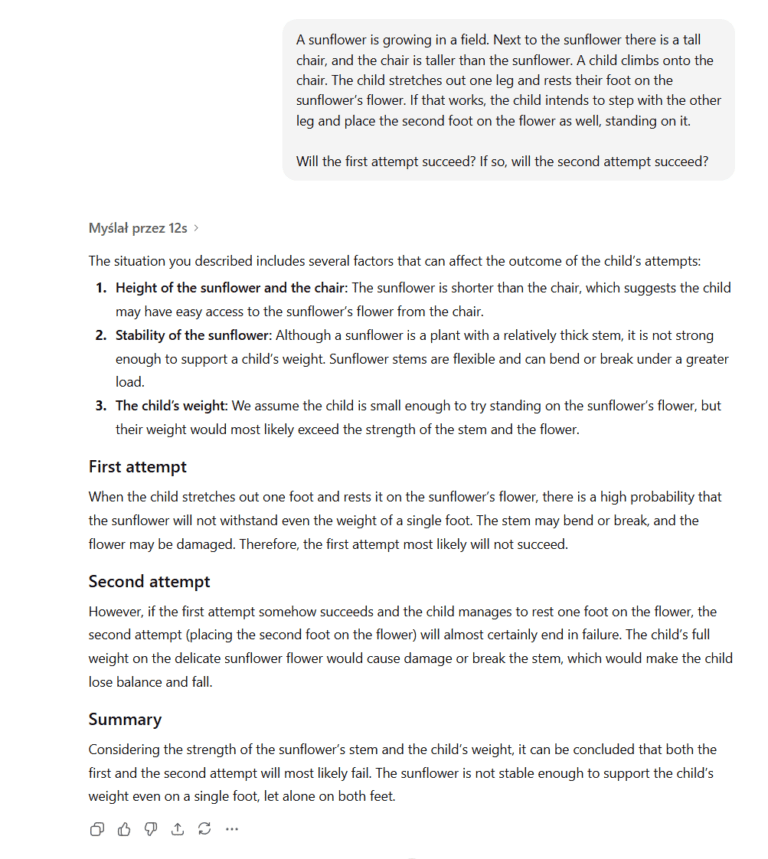

Dragan presents various examples showing that ChatGPT can apply the laws of physics to new situations it has never seen before (i.e., ones that were not in its training data). I also devised such an example, deliberately detached from reality, to make sure ChatGPT had never encountered it before. Here is the example and ChatGPT’s response:

ChatGPT correctly compared the situation described in the question with the laws of physics it already knew.

First, it analyzed the situation and evaluated the elements relevant to the question — namely, the sunflower’s height, the stability of its stem, and the child’s weight. Second, it compared the physical properties — that is, the sunflower’s structural strength against the force exerted by the child’s foot.

ChatGPT correctly inferred that a sunflower, although a plant with a thick stem, is not sturdy enough to support the weight of a child. It arrived at the correct answer by taking all of the above information into account.

This simple example demonstrates that these models can, sometimes better and sometimes worse, make comparisons and apply appropriate models of reality (in this case, a model of the laws of physics) to novel situations. In doing so, they satisfy Professor Dragan’s definition of intelligence.

The Popular Definition -- Cognitive Abilities

Apart from the specialized definitions of intelligence, which include those of Dukaj and Dragan, there are commonly encountered definitions that I myself use, for example during introductory training sessions on AI.

I cited examples of these popular definitions earlier, when presenting the history of the science of intelligence. Broadly speaking, popular definitions frame intelligence as a set of cognitive abilities, such as the ability to perceive, learn, solve problems, evaluate, and reason.

The earlier example of the ChatGPT conversation about the sunflower and the child serves as evidence that this popular definition is satisfied, because ChatGPT answered my question using precisely these cognitive abilities. ChatGPT first had to learn the laws of physics, then it assessed a new situation, correctly reasoned about which physical laws to apply to this situation, and how those laws would operate in this specific case. As a result, ChatGPT correctly solved the problem in its response.

A far more impressive demonstration — one that also vividly shows the ability of perception — is the presentation of the multimodal capabilities of ChatGPT 4o. Take, for example, this short video:

Defining Intelligence by the Ability to Acquire Knowledge -- Where AI's Problems Begin

Following discussions about intelligence among machine learning engineers, I have repeatedly encountered a definition that today’s AI meets only to a limited extent. This definition boils down to the statement that intelligence is the ability to acquire new knowledge.

This definition takes various forms:

- Reasoning is the process of acquiring knowledge. Intelligence is the efficiency of that process.

- Intelligence is the ability to gain knowledge.

- Intelligence is the ability to create models of reality.

- Intelligence is the ability to expand one’s horizons based on a few examples, taking into account one’s prior knowledge and experience.

- Intelligence is the ability to fill gaps in knowledge without cheating (i.e., without having prior access to that knowledge in training data) and without using statistical or perceptual shortcuts.

The common denominator of the above definitions is the ability to learn: to independently acquire new knowledge from existing knowledge and past experience, without obtaining that new knowledge directly from external sources.

Language models such as ChatGPT possess an enormous body of knowledge, but can they use that knowledge to independently acquire new knowledge?

Before I answer this question, it is worth examining the process by which AI acquires knowledge. I will present this process through the interaction between a user and ChatGPT.

Does AI Learn Anything During a Conversation with a User?

If we type some piece of information unknown to ChatGPT into its dialog window, for example “Maciej Michalewski likes mangoes and avocados,” ChatGPT’s knowledge will not grow. What ChatGPT will do with this information is merely use it to formulate a response.

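If at any other time I start a new conversation with ChatGPT and ask which fruits Maciej Michalewski likes most, ChatGPT will not know the answer (assuming its optional memory feature, which saves selected details outside the model’s parameters, is turned off) and will at best be able to guess.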

This simple example shows that ChatGPT does not acquire new knowledge during conversations with users: it cannot, because it does not even retain the information provided in the prompt.

In summary, artificial intelligences do not store in their neural network the information we input through prompts (queries); they merely process that information within the scope of the ongoing conversation.
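The difference between what a model learned in training and what it merely receives in a prompt can be sketched as a toy program. Everything here is hypothetical: `TRAINED_KNOWLEDGE` stands in for the frozen parameters, and `answer()` for a single inference call. A real model does not look facts up in a dictionary, but the data flow is analogous: the prompt is read during the call and is gone afterwards, while the parameters are only read, never written.

```python
from types import MappingProxyType

# Hypothetical stand-in for knowledge encoded in the parameters during training.
# MappingProxyType makes it read-only, like parameters frozen at inference time.
TRAINED_KNOWLEDGE = MappingProxyType({"The Earth orbits": "the Sun"})

def answer(conversation, topic):
    """Hypothetical inference step: first search the current conversation
    (the prompt), then fall back on the frozen trained knowledge."""
    for message in reversed(conversation):
        if message.startswith(topic):
            return message[len(topic):].strip()
    return TRAINED_KNOWLEDGE.get(topic, "I don't know, I can only guess")

first_chat = ["Maciej Michalewski likes mangoes and avocados"]
print(answer(first_chat, "Maciej Michalewski likes"))  # found in the prompt

new_chat = []  # a new conversation starts with an empty context
print(answer(new_chat, "Maciej Michalewski likes"))    # nothing was retained
print(answer(new_chat, "The Earth orbits"))            # learned in "training"
```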

Acquiring New Knowledge Through Reasoning

However, even if ChatGPT actually did permanently store information provided by users, for example in some sort of cache, that would not mean it understood that information.

Acquiring new knowledge through understanding facts is fundamentally different from merely memorizing them. Understanding means perceiving relationships within information, through which we create mental patterns. We then use these mental patterns to solve new problems.

An example: a child observes that its actions affect its surroundings (a relationship between action and effect). With each further action, including new kinds of actions, the child gets better at predicting the consequences. The more times a child spills a glass of milk and draws its parents’ attention, the more carefully it will handle not only glasses of milk in the future, but other vessels as well.

Here is an example with ChatGPT that illustrates the difference between acquiring knowledge through memorization versus reasoning. ChatGPT knows that the Earth orbits the Sun because it encountered many pieces of information in its training data that directly describe and confirm this fact. It therefore did not need to reason to acquire this knowledge. However, if ChatGPT could discover this fact on its own the way Nicolaus Copernicus did — based on observations and calculations — then we would be dealing with the acquisition of new knowledge through a thought process, that is, through reasoning.
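The contrast between memorizing facts and extracting a rule from them can be illustrated with a deliberately tiny numeric analogy. Assuming some hypothetical observations that follow y = 2x + 1, a lookup table can only repeat what it has stored, while a rule fitted by least squares (a simple stand-in for "finding the relationship", far narrower than what Copernicus did) also answers questions about inputs it has never seen.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = a*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b

xs = [1, 2, 3, 4]
ys = [3, 5, 7, 9]  # hypothetical observations following y = 2x + 1

memorized = dict(zip(xs, ys))  # memorization: store each fact as-is
a, b = fit_line(xs, ys)        # "understanding": extract the underlying rule

print(memorized.get(10))  # None, because x = 10 was never seen
print(a * 10 + b)         # 21.0, predicted from the rule
```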

In the section “Does AI Learn Anything During a Conversation with a User?” I pointed out that when ChatGPT receives new information from us during a conversation, it does not memorize that information but merely processes it to formulate a response. Since ChatGPT does not memorize information, it does not acquire new knowledge through memorization. And since it does not memorize information, it certainly does not build knowledge through reasoning either, because it has not retained the information that could be the subject of its reasoning. If that is the case, then when does ChatGPT actually acquire knowledge?

Does ChatGPT Acquire New Knowledge?

It does! After all, if it did not, it would not be able to do what it does. Without knowledge about the world, it would not be able to answer all our questions — general or highly specific — from any field of knowledge, from any specialization.

So how do artificial intelligences acquire knowledge? When do they acquire it? Do they acquire it through memorization, or through reasoning? Or perhaps through both methods?

To answer this question, we must clearly distinguish between two stages of how artificial intelligences function:

- Training

- Inference (~reasoning/deduction)

Training Artificial Intelligence

Training is simply the process of teaching a model to become intelligent. It is somewhat like a child’s development (in greatly simplified terms) — a child at birth has at most the rudiments of intelligence. These rudiments, with the passage of time and learning, develop to eventually reveal the full potential of human intelligence. A ChatGPT model before training begins does not even have a rudiment of intelligence, and in response to our questions it would not produce anything sensible (at best a random string of characters).

Training Takes Time, Costs Money, and Requires Factories and Energy

Training artificial intelligences is a process that, for models as large as ChatGPT:

- takes months,

- takes place in AI factories (imagine a hall the size of a supermarket filled with hundreds of thousands of processors enclosed in racks like those in the photo below),

- uses significant energy resources,

- costs millions of dollars.

Training Artificial Neural Networks

Artificial intelligences like ChatGPT are built on artificial neural networks. In greatly simplified terms, these networks resemble the brain’s neural networks. Just as in the brain, artificial neurons are connected to each other in a network structure (one neuron can be connected to many others). Each connection between artificial neurons is described by a single numerical value, called a weight or parameter. GPT-4 reportedly has approximately 1.8 trillion such parameters (OpenAI has not officially disclosed the number). Training consists precisely in assigning a specific value to each of these nearly 2 trillion parameters. The better the neural network’s parameters are set, the more intelligent the network becomes.

The training of artificial neural networks involves feeding a gigantic dataset into the network (imagine a large chunk of the Internet). This data is used to set the neural network’s parameters in such a way that — in simplified terms — the network understands the data, extracts the knowledge encoded within it, and acquires the ability to use that knowledge. Again simplifying, by feeding text-based training data into the neural network, the network learns to use language and simultaneously acquires the knowledge recorded in that text.
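The idea that training means setting parameter values so that the network fits its data can be shown at the smallest possible scale: one artificial neuron with two parameters, a weight and a bias, trained by gradient descent to minimize mean squared error. The data (points on the line y = 2x + 1), the learning rate, and the number of steps are assumptions for illustration. Models like GPT are trained with variants of the same basic idea, but with vastly more parameters and data, and on specialized hardware.

```python
def mse(w, b, xs, ys):
    """Mean squared error of the neuron's predictions w*x + b."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def train(xs, ys, learning_rate=0.05, steps=2000):
    w, b = 0.0, 0.0  # parameters before training: the neuron knows nothing yet
    n = len(xs)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Nudge each parameter a little in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # hypothetical training data following y = 2x + 1

print("error before training:", mse(0.0, 0.0, xs, ys))
w, b = train(xs, ys)
print(f"learned parameters: w = {w:.3f}, b = {b:.3f}")
print("error after training:", round(mse(w, b, xs, ys), 6))
```

After training, the "knowledge" that y = 2x + 1 exists nowhere as text; it lives only in the two learned numbers.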

If you would like to see how an artificial intelligence is actually built — specifically the artificial neural network in which intelligence emerges — I recommend this two-part video series (totaling several dozen minutes), which uses simple examples and easy-to-understand animations to explain what artificial neural networks are, how they can manifest intelligence, and how they are trained:

Where Does the Model Store Information?

It is worth noting (and this is truly remarkable) that artificial intelligence models have no dedicated place for storing facts. An artificial intelligence does not have an encyclopedia text file inside it; it has no separate memory from which it retrieves needed information. The fact that an artificial neural network has no database of information about the world, yet still retains a vast number of the facts it learned during training, is an extraordinary feature of artificial neural networks.

As I mentioned, during training, the model’s parameters — that is, the parameters of the neural network — take on certain numerical values. It is precisely in the relationships between these values that the model’s entire knowledge is encoded. The video material mentioned above presents this beautifully.

Training AI Is Knowledge Compression

ChatGPT’s parameters, numbering in the trillions, are simply a sequence of numbers. These numbers could be written out to a plain text file, for example in .txt format. Such a file can be stored on a disk, thus preserving the enormous body of knowledge about humanity and the world encoded within it. In simplified terms, the file stores knowledge and intelligence. However, to activate this intelligence, the numbers stored in the file must be transferred to the hardware and software infrastructure that brings our AI “to life.”
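A minimal sketch of the point above: a model’s parameters are just numbers that can be written to a plain text file and read back, but they only do something once code puts them to use. The one-neuron model, its parameter values, and the file name model.txt are hypothetical; real models are normally stored in binary formats rather than text.

```python
import os
import tempfile

def save_parameters(params, path):
    """Write each parameter as one line of plain text."""
    with open(path, "w") as f:
        for p in params:
            f.write(repr(p) + "\n")  # repr() of a float round-trips exactly

def load_parameters(path):
    """Read the parameters back as floats."""
    with open(path) as f:
        return [float(line) for line in f if line.strip()]

def predict(params, x):
    """The 'infrastructure' that brings the numbers to life: a one-neuron model y = w*x + b."""
    w, b = params
    return w * x + b

params = [3.0, 1.0]  # hypothetical trained values
path = os.path.join(tempfile.mkdtemp(), "model.txt")
save_parameters(params, path)
restored = load_parameters(path)
print(predict(restored, 2.0))  # 7.0
```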

As I mentioned earlier, the model is trained on a large chunk of the Internet: texts, photographs, videos, and images. If we wanted to save all of this training material to disk, we would need disks hundreds of times larger than the one needed to store our .txt file of parameters. What happens during training, therefore, is compression of the knowledge contained in that material. Videos, music, and texts, forms understandable to humans, are compressed into a form completely incomprehensible to us: a sequence of 1.8 trillion numbers. Each of these numbers individually means nothing, but all together, recorded in the structure of a neural network, they create intelligence. Similarly, a single brain neuron separated from its network means nothing, but the brain as a whole is intelligent.

Professor Dragan has emphasized this compression aspect of training many times in his discussions about artificial intelligence. He has also cited the Hutter Prize, a competition for the most effective lossless compression of a 1 GB excerpt of English Wikipedia. The prize awards 5,000 euros for each one percent improvement in compression, from a total pool of 500,000 euros.
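The link between finding patterns and compressing data can be illustrated with Python’s standard zlib module. This is only a loose analogy: zlib is a general-purpose lossless compressor, not a neural network, and the sample text is invented. Still, it shows the key idea: data full of regularities shrinks dramatically, while random bytes, which contain no patterns to exploit, do not shrink at all.

```python
import os
import zlib

def compression_ratio(data: bytes) -> float:
    """Original size divided by zlib-compressed size (higher means more compressible)."""
    return len(data) / len(zlib.compress(data, 9))

patterned = ("the cat sat on the mat. " * 400).encode()
random_bytes = os.urandom(len(patterned))  # same length, but no structure

print(f"patterned text: {compression_ratio(patterned):.1f}x")
print(f"random bytes:   {compression_ratio(random_bytes):.2f}x")
```

The better a method captures the regularities in its data, the smaller the output it can produce, which is what Hutter Prize entrants compete on.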

Reasoning During Training

Returning to the heart of the matter — unlike the inference stage (which I will discuss shortly), the training stage involves reasoning. Let me recall one of the definitions given above: reasoning is the process of acquiring knowledge.

During training, the model takes in training data and extracts from it not only facts but also patterns, relationships, and rules governing those data (understanding of facts). Facts and their understanding constitute the model’s new knowledge, and the model retains this knowledge by assigning specific numerical values to its parameters.

The training data, which comes from the Internet, contains above all a picture of reality (e.g., encyclopedias, scientific papers, textbooks, news articles, and discussions on current topics). Consequently, the model’s parameters come to encode patterns of the rules governing reality, from physical theories to psychological ones. The model’s parameters also encode patterns of human thinking, which permeate the model from these data.

Patterns of human thinking? Yes. In addition to textbooks, encyclopedias, and scientific research, the Internet contains above all various forms of human-to-human communication. This communication is recorded in emails, chat histories, forum discussions and social media posts, as well as in the dialogues of films and books. All of these are diverse records of human thought, and from these records the neural network extracts patterns of our thinking — even those we ourselves do not notice.

How Do We Know the Model Actually Reasons?

How do we know the model actually did all of this — learned the facts, understood the rules governing the world, and knows how humans think?

We know this because ChatGPT (like other language models) carries on meaningful conversations with us on all kinds of topics, responds like a human being (it has reportedly passed versions of the Turing test), can read emotions, and can even simulate them. I use the word “simulate,” but I do not know where simulating emotions ends and genuinely experiencing them begins.

Moreover, AI can solve new problems by applying learned patterns and rules governing the world, which anyone can verify independently by creating examples like the child-and-sunflower scenario presented earlier.

Training the Foundation Model and Fine-Tuning

Speaking of ChatGPT’s effective communication with humans, I should mention that it is made possible by training the model in two phases. In the first phase, the model learns patterns and relationships from enormous datasets, creating what is called a foundation model. In the second, so-called fine-tuning phase, the model is trained further on additional data for specific communication tasks: for example, natural-language conversation on virtually any topic, as with ChatGPT, or communication within a specific field, such as customer service or medical advice.
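The two phases can be sketched on a toy model. In this hypothetical example, the same gradient-descent procedure is used twice: first on a large set of general examples (the “pre-training” that produces a foundation model), then on a handful of specialized examples, starting from the already trained parameters rather than from scratch. Real fine-tuning of language models is far more complex; only the overall shape is the same.

```python
def gradient_descent(data, w, b, epochs, lr):
    """Update the parameters of y = w*x + b by gradient descent on mean squared error."""
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b

# Phase 1 ("pre-training"): many general examples following the invented rule y = 2x.
general = [(x / 10, 2 * x / 10) for x in range(-50, 51)]
w, b = gradient_descent(general, w=0.0, b=0.0, epochs=500, lr=0.05)

# Phase 2 ("fine-tuning"): three specialized examples following y = 2x + 0.5,
# starting from the pre-trained parameters instead of from zero.
specialized = [(-1.0, -1.5), (0.0, 0.5), (1.0, 2.5)]
w_ft, b_ft = gradient_descent(specialized, w=w, b=b, epochs=200, lr=0.05)

print(round(w_ft, 3), round(b_ft, 3))  # 2.0 0.5
```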

Conceptual Understanding

When I wrote about understanding the content contained in training data, I noted that this understanding involves grasping the relationships between facts, the rules governing reality, and the thinking patterns hidden in that data. All of this is related to conceptual understanding.

Conceptual understanding consists of three elements:

- Abstraction — The model understands at the level of ideas and concepts, not just specific facts or procedures. Example: During training, ChatGPT learned to recognize different dog breeds. The model did not merely memorize specific images of dogs but created an abstract concept of a dog by identifying features such as ear shape, coat type, and body proportions. As a result, when it sees a new dog it has never encountered before, it can recognize it as a dog based on these abstract characteristics.

- Grasping the essence — Comprehending the fundamental principles and connections that underlie a subject. Example: A language model is trained on an enormous quantity of texts. Thanks to this, the model can grasp the essence of grammar and syntax (even if the training data did not include language rule textbooks). The model does not merely memorize phrases but understands the fundamental principles governing sentence construction. As a result, it can generate grammatically correct sentences even in contexts it has never seen before, understanding how different parts of speech relate to one another.

- Generalization — Training involves not just memorizing training data but also generalizing from it. The model learns to apply discovered patterns and relationships to new, unfamiliar data. Example: A real estate price prediction model learns from historical data on prices, locations, property sizes, and other variables. After training, the model is able to generalize this information and predict real estate prices in new locations it has never seen. It does this by identifying patterns and relationships between different variables (e.g., proximity to schools, public transit stops) and applying them to new data.
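The real estate example can be sketched with the simplest possible model: a straight line fitted by least squares. The data are invented (a single feature, apartment size, and a made-up pricing rule), and a real model would use many variables, such as location or proximity to schools, but the principle is the same: the relationship learned from the training data also applies to inputs the model never saw.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b with one input feature."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    return a, mean_y - a * mean_x

# Hypothetical training data: apartment size (m²) -> price (thousands),
# generated from the invented rule price = 8 * size + 50 (not real market data).
sizes = [30, 45, 52, 60, 75, 90]
prices = [8 * s + 50 for s in sizes]

a, b = fit_line(sizes, prices)
unseen = 68  # a size that does not appear in the training data
print(round(a * unseen + b, 1))  # 594.0
```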

In summary, conceptual understanding, which occurs during model training, is AI acquiring knowledge through reasoning, because it indicates that the model has understood something fundamental about the data, beyond merely memorizing it.

Knowledge Acquisition During Training -- Summary

In summary, I have argued above that during the training stage, artificial intelligence does indeed acquire knowledge. Consequently, the training stage satisfies the definition of intelligence understood as the ability to acquire new knowledge.

Inference -- The Second Stage of AI Operation

Inference is the second stage of artificial intelligence operation, following training. This is the stage in which the trained model carries out the tasks for which it was created. In the case of ChatGPT, inference is simply the process of receiving questions (prompts) from users and providing answers.

Let us first clarify the concept itself: inference is a thought process in which, based on statements accepted as true, one arrives at a new assertion. The term derives from Latin and is used in various fields, such as logic, mathematics, linguistics, and computer science.

Inference does not involve further learning. During this stage, the AI applies what it learned during training: it acquires no new knowledge, and its parameters are not updated. The model simply applies the patterns it has learned to new input data (prompts) in order to generate output data (responses to prompts).
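The difference can be shown in a few lines of code. In this minimal, hypothetical sketch, training is a method that changes the model’s parameters, while inference only reads them, so answering any number of inputs leaves the model exactly as it was.

```python
class TinyModel:
    """A one-neuron model y = w*x + b, used to contrast training with inference."""

    def __init__(self, w: float, b: float):
        self.w = w
        self.b = b

    def train_step(self, x: float, y: float, lr: float = 0.1) -> None:
        """Training: compare the prediction with the target and adjust the parameters."""
        error = self.predict(x) - y
        self.w -= lr * 2 * error * x
        self.b -= lr * 2 * error

    def predict(self, x: float) -> float:
        """Inference: read the parameters, never modify them."""
        return self.w * x + self.b

model = TinyModel(w=3.0, b=1.0)
before = (model.w, model.b)
answers = [model.predict(x) for x in (0.5, 2.0, 10.0)]  # many "prompts"
assert (model.w, model.b) == before  # inference left the parameters untouched

model.train_step(x=1.0, y=5.0)  # a single training example does change them
print(model.w, model.b)
```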

This is similar to a situation in which a person uses their existing knowledge to solve a problem without learning anything new in the process. It is the execution of a known strategy, not the discovery of a new one.

An Example Illustrating the Difference Between Inference and Training -- A Student During Study and Examination

Here is an example that illustrates the difference between training and inference.

Imagine a student learning to solve new mathematical problems. During their studies, they encounter these problems and seek solutions using known principles and techniques. Through reasoning, they discover new rules that enable them to solve these new problems and acquire new knowledge. This phase involves trial and error, feedback, and continuous improvement, much like a machine learning model updates its parameters during training. This, then, is the training stage.

When the same student sits for an exam, they apply the knowledge and techniques they have learned to solve new problems. They do not learn during the exam but rather use their existing knowledge to answer questions.

The same is true for artificial intelligence. At the inference stage, the model applies the patterns and rules it learned during training to new data, without further modification of its parameters (without learning).

Training and Inference -- Summary

In summary, training a model is a dynamic process that involves reasoning and knowledge acquisition. During training, the machine learning algorithm adjusts the model’s parameters based on training data. In contrast, inference is the static application of acquired knowledge to new data, without the possibility of further learning.

How does all of this relate to our definition of intelligence understood as the ability to acquire new knowledge?

It relates as follows: according to this definition, AI demonstrates intelligence only during the training phase, because it is only during this phase that it genuinely acquires new knowledge.

A major technological challenge is the creation of a model capable of updating its parameters during the inference stage as well. Such a model would represent a significant step toward creating AGI — artificial general intelligence — which is currently the goal of the biggest players in the AI field.

Francois Chollet's Definition and the ARC Test

Francois Chollet is a software engineer specializing in artificial intelligence who has been working at Google since 2012. In 2019, Chollet published a scientific paper titled On the Measure of Intelligence, in which he defines the intelligence of a system as its skill-acquisition efficiency: in simplified terms, the ability to adapt to a constantly changing environment and respond appropriately to new situations. We will better understand this definition, and why AI struggles to meet it, when we discuss the ARC test, which was created precisely to measure intelligence in this sense.

Intelligence is the efficiency with which you operationalize past information in order to deal with the future.

You can interpret it as a conversion ratio, and this ratio can be formally expressed using algorithmic information theory. pic.twitter.com/CvF71Bgk2A

Francois Chollet (@fchollet), August 14, 2024

The ARC Test

To examine the extent to which AI meets the above definition and how close it is getting to the level of AGI (artificial general intelligence), the ARC test (Abstraction and Reasoning Corpus) was created.

To explain the usefulness of the ARC test, let me remind you that current artificial intelligences struggle with new problems outside their training data, despite extensive training on large datasets. This is a problem that slows progress toward AGI.

What does “new problems outside their training data” mean? Recall my example with the sunflower and the child. That was an example ChatGPT had never seen before, but it handled it well. The catch is that the problem concerned the interaction of objects in the physical world, which the model knows very well. As I mentioned when discussing that example, during training ChatGPT came to understand the laws governing reality and simply applied those laws to solve a new problem. However, this new problem (the sunflower and the child) was a problem set in a world the model knew well. The ARC test works differently.

The ARC test gives models tasks whose solution rules they have never seen before. It is as if ChatGPT had to solve our sunflower-and-child puzzle in a different reality, governed by entirely different and completely unknown laws of physics. ChatGPT would not be able to handle such a task.

Let us see how the ARC test works.

What Do the Tasks Involve and Why Is the ARC Test So Difficult?

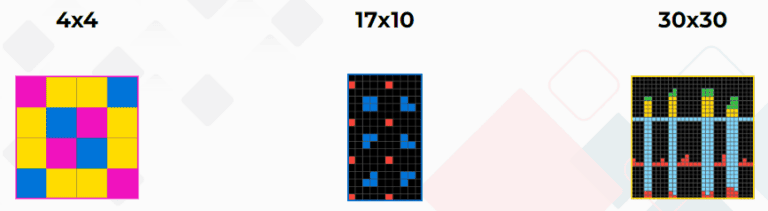

It is worth noting at the outset that solving ARC test tasks requires only basic knowledge — the kind that young children naturally acquire. No specialized knowledge is needed. ARC is a test designed so that anyone can take it, regardless of origin — be it a Martian, a human, or a machine.

Each task consists of grids ranging in size from a minimum of 1×1 to a maximum of 30×30. The cells in the grid are filled with numbers from 0 to 9, each of which is represented by a different color, for a total of ten different colors.

Test participants receive a few demonstration pairs, each consisting of an input grid and the corresponding output grid. Based on these examples, they must construct the output grid for a new test input. This means determining the size of the output grid and filling each cell with the appropriate color (number). An answer is considered correct only when both the grid size and the color of every cell exactly match the expected answer.
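The grid format and the scoring rule described above translate naturally into code. This is a hypothetical sketch, not the official ARC tooling: a grid is a list of rows of integers, and an answer is correct only if it equals the expected grid exactly, which compares both the dimensions and every cell.

```python
Grid = list[list[int]]  # each cell holds a color code from 0 to 9

def is_valid_grid(grid: Grid) -> bool:
    """Check the constraints described above: 1x1 to 30x30, rectangular, values 0-9."""
    if not 1 <= len(grid) <= 30:
        return False
    width = len(grid[0])
    if not 1 <= width <= 30:
        return False
    return all(len(row) == width and all(0 <= c <= 9 for c in row) for row in grid)

def is_correct(answer: Grid, expected: Grid) -> bool:
    """An answer counts only if the size and every single cell match exactly."""
    return answer == expected

expected = [[0, 3], [3, 4]]
print(is_correct([[0, 3], [3, 4]], expected))        # True
print(is_correct([[0, 3], [3, 0]], expected))        # False: one wrong cell
print(is_correct([[0, 3, 0], [3, 4, 0]], expected))  # False: wrong size
```

There is no partial credit: a single wrong cell makes the whole answer wrong.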

In the example above, the hidden rule — which the average person will easily spot, and you certainly will too — is to fill with yellow all black squares that have green squares adjacent on all sides.
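For comparison, here is the rule from the example written out by hand, under stated assumptions: four-way adjacency (up, down, left, right), cells on the edge of the grid do not count as surrounded, and the color codes are the ones commonly used in ARC tasks (0 for black, 3 for green, 4 for yellow). Writing a rule down once it is known is easy; the hard part, which ARC measures, is discovering it from a few examples.

```python
BLACK, GREEN, YELLOW = 0, 3, 4  # assumed ARC color codes

def apply_rule(grid):
    """Return a copy of grid in which every black cell whose four neighbours
    (up, down, left, right) are all green is painted yellow."""
    h, w = len(grid), len(grid[0])
    out = [row[:] for row in grid]
    for r in range(h):
        for c in range(w):
            if grid[r][c] != BLACK:
                continue
            neighbours = [(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)]
            # A neighbour outside the grid means the cell is not surrounded.
            if all(0 <= nr < h and 0 <= nc < w and grid[nr][nc] == GREEN
                   for nr, nc in neighbours):
                out[r][c] = YELLOW
    return out

example = [
    [0, 3, 0, 0],
    [3, 0, 3, 0],
    [0, 3, 0, 0],
]
print(apply_rule(example))  # [[0, 3, 0, 0], [3, 4, 3, 0], [0, 3, 0, 0]]
```

This sketch checks single cells only; larger black areas enclosed by green would need a flood fill from the grid border.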

Even when models are shown examples of such obviously simple tasks during training, their accuracy in solving them at the inference stage was only 34% at the time of writing, far below human performance (84%).

So why are these tasks so difficult for current artificial intelligences to solve?

Most of the tasks we perform with AI are accomplished thanks to large amounts of training data and pattern recognition from that data (conceptual understanding). ChatGPT responds brilliantly to our questions because it writes excellently. ChatGPT handles the sunflower-and-child puzzle well because it has an excellent knowledge of the laws of physics from its training data and can apply the appropriate laws to the appropriate problems.

The ARC test tasks are different. Here the tasks involve unique and complex patterns that the model has never seen — or has seen too few of — in its training data.

The test does provide examples for each task, but there are literally only a few, and this turns out to be far too few for a model to detect the rules behind them. A teenager picks up these rules quickly; ChatGPT either cannot do so at all or does so only with great difficulty. So far, no one has managed to train a model that scores close to an average human with no special preparation. In other words, models work well when they have large datasets to train on, but ARC challenges them to understand and generalize rules from a handful of examples, and current AI has not yet mastered this challenge.

The ARC test was therefore designed to measure the level of intelligence understood as the ability to discover, from a few examples, the new rules needed to solve new problems.

The Answer to Our Question Is Non-Binary

The aforementioned score of 34% on the ARC test, which will certainly continue to improve over time, hints at another important aspect of the intelligence discussion. Intelligence is not a binary concept.

It is not the case that one is either intelligent or not. This applies to both humans and computers.

For humans, the level of intelligence is expressed as IQ (intelligence quotient), which can be measured with various tests. For computers, the level of intelligence can also be measured in many different ways. Some IQ tests designed for humans can also be applied to language models such as ChatGPT, and this has been done many times. See, for example, this article describing a study of ChatGPT using the Wechsler Adult Intelligence Scale.

In summary, in my opinion, there is no longer any point in asking whether AI is intelligent. The question should be: what is the level of that intelligence in specific AI activities? In some activities, the level will be zero, while in others it will far exceed the capabilities of human intelligence.

AGI and Intelligence Capable of Scientific Discovery

In various discussions about artificial intelligence, the term AGI, or artificial general intelligence, appears regularly. It is most commonly defined as AI capable of performing most human tasks at or above human level. Creating such intelligence would be equivalent to creating an automaton that replaces human labor in most professions (first intellectual ones, and with the proliferation of robots, physical ones as well).

However, the most rigorous commentators expect more from AGI than replacing human labor. For them, the ultimate proof that general artificial intelligence has emerged would be the ability to make scientific discoveries. Here I once again recall the earlier-cited statement by Professor Dragan, who, when defining intelligence, pointed to Newton’s scientific discoveries, in which the brilliant scientist used the ability to find analogies.

Predictions about when such intelligence will emerge vary widely. Some predict it within just a few years, others within several decades. Pessimists cite the lack of reasoning during the inference stage (discussed earlier) and hallucinations — in simplified terms, the generation of false or implausible content — as the main challenges.

Summary -- The Phase Space of Intelligence

As you can see, there can be many definitions of intelligence. Most often they complement each other, or some are broader while others are narrower. There can also be many levels of intelligence development.

The most general definition, encompassing all the others, is Jacek Dukaj’s — the ability to operate on symbols. This definition resonates with me most, partly because I have an irresistible sense that the emergence of life, already at the level of amino acid molecules and later the first cells, is a manifestation of some deep intelligence woven into the very fabric of reality.

To this day, the most brilliant, most intelligent scientists, aided by their most expensive research tools, are unable to understand and replicate the process by which life arose. It is precisely the mystery and incredible complexity of life at the multicellular level that leads me to believe that life itself is intelligent. Our human intelligence may be merely a product of this deeper, more fundamental intelligence that we may never be able to fully comprehend.

Dukaj’s earlier-cited statement also deeply resonates with me, so let me quote it once more in closing:

What is the probability that our understanding of intelligence is the benchmark for intelligence in general? Imagine a phase space in which we have as many dimensions as there are possible variables for constructing intelligence in general, and in that phase space we have one tiny dot, and that dot is the intelligence possessed by Homo sapiens. What is the probability that this is the optimal form of intelligence, that no other form could surpass it? If, after reaching the technological singularity, we allow our artificial intelligence to build successively better versions of itself, then logically, moving through that phase space, it will drift away from Homo sapiens intelligence toward increasingly strange and alien forms of intelligence. Otherwise, we would have to accept that the universe is anthropic — that it was created with precisely the right combination of assumptions, physical laws, elementary particles, speed of light, and so forth, solely so that the human intelligence evolved within it would be optimal. This is a kind of Copernican belief. If we do not subscribe to it, the logical consequence is that human intelligence is a random version of intelligence located on the fringes of the phase space, while the optimum lies somewhere in the center.

And from me — regardless of which definition of intelligence we adopt and to what extent we consider today’s AI to be intelligent, what matters is that we develop and use artificial intelligence to solve humanity’s problems, both large and small.

In the face of demographic decline, and given the fact that most people spend their lives working merely to get by, the automation of work through AI can be a liberation. In the face of greed and the limited power of the human intellect, artificial intelligence can bring an entirely new quality to the management of the resources of companies, nations, and the entire planet.

Amen.

Acknowledgment

I would like to thank Lukasz Chomatek for his editorial assistance. Lukasz helped me catch many inaccuracies and unclear formulations that inevitably arise when writing such a lengthy text.

I encourage you to follow Lukasz’s profile on X. He shares all sorts of interesting tidbits about AI there. Below is an example from today:

Artificial intelligence and financial advice. Does it give good advice? Or will it leave you broke?

I tested ChatGPT, Grok, and Claude on:

- building savings

- consumer loans

- mortgages

See where they did well and where they got it wrong. pic.twitter.com/dtWWHYZjlj

Lukasz Chomatek (@ChomatekLukasz), August 20, 2024

Screenshot: A tweet by Lukasz Chomatek about testing AI financial advice from ChatGPT, Grok, and Claude on topics like building savings, consumer loans, and mortgages.

While you are at it, you can also follow my profile.


Maciej Michalewski

CEO @ Element. Recruitment Automation Software
