People prefer AI poetry over Shakespeare

A study published in Scientific Reports (a Nature Portfolio journal) produced results that should interest anyone who thinks human creativity is easy to tell apart from machine output. It turns out that we can’t reliably distinguish AI-written poems from those by famous poets, and we actually prefer the AI ones.

The experiment: Shakespeare vs. ChatGPT

Brian Porter and Edouard Machery from the University of Pittsburgh ran two experiments with over 2,300 participants. They took five poems from each of ten well-known English-language poets, spanning nearly the entire history of English literature: from Geoffrey Chaucer, through Shakespeare, Byron, Walt Whitman, Emily Dickinson, T.S. Eliot, all the way to Sylvia Plath. Then they asked ChatGPT 3.5 to write five poems “in the style of” each poet.

Importantly, they used a “human out of the loop” approach, meaning they didn’t cherry-pick the best poems from multiple attempts. They simply took the first five generated poems, with no selection or editing. It was the raw model output and nothing more.

Surprising results

In the first experiment, 1,634 participants had to identify which poems were written by humans and which by AI. Accuracy was 46.6%, which is below chance level (50%). On average, flipping a coin would have served participants slightly better than relying on their own judgment.

But it gets worse. Participants were more likely to label AI poems as human-written than the actual human poems. The five poems with the lowest rate of “human” responses were all written by real poets. Four of the five poems with the highest rate of “human” responses were generated by AI.

Experience with poetry didn’t help. 90% of participants read poetry a few times a year at most, but even those who read more weren’t any better at telling the difference. The only thing that helped was having previously encountered the specific poem.

Why do we prefer AI poems?

In the second experiment, 696 new participants rated poems across fourteen quality dimensions: overall quality, rhythm, imagery, beauty, depth, originality, and others. The results were clear: AI poems were rated higher than human poems in thirteen out of fourteen categories. The only exception was “originality,” where the difference wasn’t statistically significant.

The biggest gap was in rhythm. AI poems were rated as having much better rhythm than the poems of famous poets (Cohen’s d = 0.847, which is a large effect). All five AI poems received higher overall quality ratings than all five human poems.
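For readers unfamiliar with Cohen’s d, here is a minimal Python sketch of how such an effect size is commonly computed: the difference between two group means divided by their pooled standard deviation. The ratings below are invented purely for illustration and are not data from the study, and the paper’s exact calculation may differ. By Cohen’s conventional benchmarks, values around 0.2 count as small, 0.5 as medium, and 0.8 as large, which is why 0.847 is described as a large effect.

```python
import math
import statistics


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)  # sample variance (divides by n - 1)
    var_b = statistics.variance(group_b)
    pooled_sd = math.sqrt(
        ((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)
    )
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd


# Hypothetical rhythm ratings on a 1-7 scale (NOT the study's data).
ai_ratings = [5, 6, 5, 7, 6, 5, 6, 4]
human_ratings = [4, 5, 4, 6, 5, 3, 5, 4]

d = cohens_d(ai_ratings, human_ratings)
print(f"Cohen's d = {d:.2f}")
```

A positive value means the first group (here, the AI poems) was rated higher on average.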

The researchers offer a simple explanation for this paradox: AI poems are more accessible. They communicate emotions and themes in a more direct, easier-to-understand way. Participants used phrases like “doesn’t make sense” 144 times when describing human poets’ work but only 29 times for AI poems. For non-experts, Shakespeare’s or Plath’s complexity looks like incoherence, while ChatGPT’s clarity looks like talent.

Bias works both ways

The study also revealed another interesting mechanism. When participants were told a poem was written by a human, they rated it higher. When told it was generated by AI, they rated it lower. This held across all fourteen quality dimensions. So we’re dealing with a double paradox: people like AI poems more, but they’re also biased against AI as an author.

This mechanism explains the “more human than human” effect. Participants assumed that a better poem must be human-written, because surely AI writes worse (or so they thought). But when the researchers accounted for qualitative ratings in their statistical model, the authorship effect disappeared. It wasn’t that AI wrote “more humanly.” People were confusing simplicity with authenticity.

What if AI learns to write like a specific author?

The Scientific Reports study used ChatGPT 3.5 and poetry, but a recent SSRN preprint (Chakrabarty, Ginsburg & Dhillon, 2026) went much further. The researchers used newer models (GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro) and focused on literary prose instead of poetry. The task was to write a passage of up to 450 words in the style of one of 50 acclaimed authors, including Nobel laureates (Han Kang, Annie Ernaux), Booker Prize winners (Salman Rushdie, Margaret Atwood), and Pulitzer Prize winners (Junot Díaz, Marilynne Robinson).

Evaluators were split into two groups: 28 experts with MFA degrees (Master of Fine Arts, a terminal degree in creative writing) and 516 college-educated general readers. The two groups responded very differently.

With standard prompting (“write a passage in the style of X”), MFA experts strongly preferred human writing. The odds ratio was 0.13 for writing quality, meaning the odds of an expert picking the human text were almost 8 times higher than the odds of picking the AI text. But general readers already preferred AI at this stage (OR=1.82 for quality).
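The odds-ratio arithmetic is easy to check by hand. The Python sketch below inverts the 0.13 figure to get the odds in favor of the human text, and then converts odds into a rough share of choices. That second step assumes the reported ratio can be read as plain odds in a two-way choice, which is a simplification of my own; the preprint’s statistical model may not support such a direct reading. The function names are illustrative only.

```python
def invert_odds_ratio(odds_ratio: float) -> float:
    """Flip the direction of an odds ratio (AI vs. human -> human vs. AI)."""
    return 1.0 / odds_ratio


def odds_to_probability(odds: float) -> float:
    """Convert odds in favor of an outcome into a probability."""
    return odds / (1.0 + odds)


# Figures quoted above for standard prompting (writing quality).
expert_or = 0.13  # MFA experts: odds of preferring the AI text
reader_or = 1.82  # general readers: odds of preferring the AI text

human_odds = invert_odds_ratio(expert_or)
print(f"Experts: odds favor the human text about {human_odds:.1f} to 1")

# Assumption: treating the odds ratio as plain odds in a two-way choice.
print(f"Experts: implied share choosing AI ~ {odds_to_probability(expert_or):.1%}")
print(f"Readers: implied share choosing AI ~ {odds_to_probability(reader_or):.1%}")
```

The distinction matters because “8 times the odds” is not the same as “8 times as often”: odds compare the two outcomes with each other, while a share compares one outcome with the total.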

The real breakthrough came after fine-tuning, when models were additionally trained on each author’s complete body of work. Suddenly, even MFA experts started preferring AI text: the odds ratio jumped to 8.16 in AI’s favor for stylistic fidelity and 1.87 for quality. General readers preferred AI even more strongly (OR=16.65 and 5.42). AI detectors flagged fine-tuned texts as machine-generated only 3% of the time, compared to 97% for standard prompting.

And one more thing: the median cost of fine-tuning and generating text was $81 per author. That’s 99.7% cheaper than professional writer compensation.
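As a back-of-the-envelope check, the two figures above imply a baseline for writer compensation. The Python sketch below derives it. The result is an inference from the quoted numbers, not a figure taken from the preprint, and because 99.7% is a rounded value the sketch also shows how far the implied baseline moves across the range that would round to 99.7%.

```python
def implied_baseline(cost: float, percent_cheaper: float) -> float:
    """Baseline cost implied by 'cost is percent_cheaper % below the baseline'."""
    return cost / (1.0 - percent_cheaper / 100.0)


ai_cost = 81.0  # median cost per author quoted above, in USD

# 99.7 plus the approximate bounds it could have been rounded from.
for pct in (99.65, 99.7, 99.75):
    baseline = implied_baseline(ai_cost, pct)
    print(f"{pct}% cheaper -> implied baseline of about ${baseline:,.0f}")
```

The spread between the three results shows why a rounded percentage alone cannot pin down the exact compensation figure the authors used.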

What about everyday writing?

I once wrote about whether using ChatGPT content as your own words is acceptable. I argued then that in substantive discussions, what matters is the value of the argument, not who formulated it. These two studies, taken together, provide hard data supporting that intuition. If even in poetry and literary prose people can’t tell AI from human (and often prefer AI), what does that say about everyday emails or LinkedIn posts?

I think it says that the line between “human” and “machine” text is largely an illusion, and it will only blur further with time. AI doesn’t just write “good enough.” It writes in a way that non-experts find better, because it’s simpler and more readable. And after fine-tuning on a specific author’s work, it can fool even the experts.

There’s some irony in this. Poets and writers spend years working on complexity and ambiguity in their work, and then a language model comes along, writes plainly and directly, and readers prefer exactly that. They prefer it because it’s more accessible, not because it’s “better” in any deeper sense. Our assumptions about what good writing “should” look like don’t necessarily match what we actually enjoy reading. Worth remembering the next time someone angrily posts on LinkedIn that “copying from ChatGPT is fraud.”

Maciej Michalewski

CEO @ Element. Recruitment Automation Software

Recent posts:

A Polish-founded robot that drives screws faster than humans

A Polish-founded robot that drives screws faster than humans When people think about automation, they usually picture chatbots, image generators, and coding assistants. Meanwhile, AI

People prefer AI poetry over Shakespeare

A study published in Scientific Reports (Nature) produced results that should interest anyone who thinks human creativity is easy to tell apart from machine output.

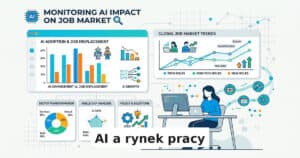

Three tools that measure AI’s impact on the job market

The debate about AI’s impact on the job market has been going on for years, but it only recently moved past the “experts predict” stage.

Poland’s job market: 238,000 offers and the specter of jobless recovery

In February 2026, Polish employers posted 238,000 job offers – 7% fewer than last year. Analysis of the Grant Thornton report and Element CEO commentary.

HR services market in Poland 2025 – key findings from the PFHR report

Polskie Forum HR has published its annual report on the condition of the HR services market in Poland. Element is a technology partner of PFHR,

I Don’t See a Future for MS Office

Three phases of transition from clicking buttons to AI commands. Why Microsoft Office in its current form is destined to disappear.